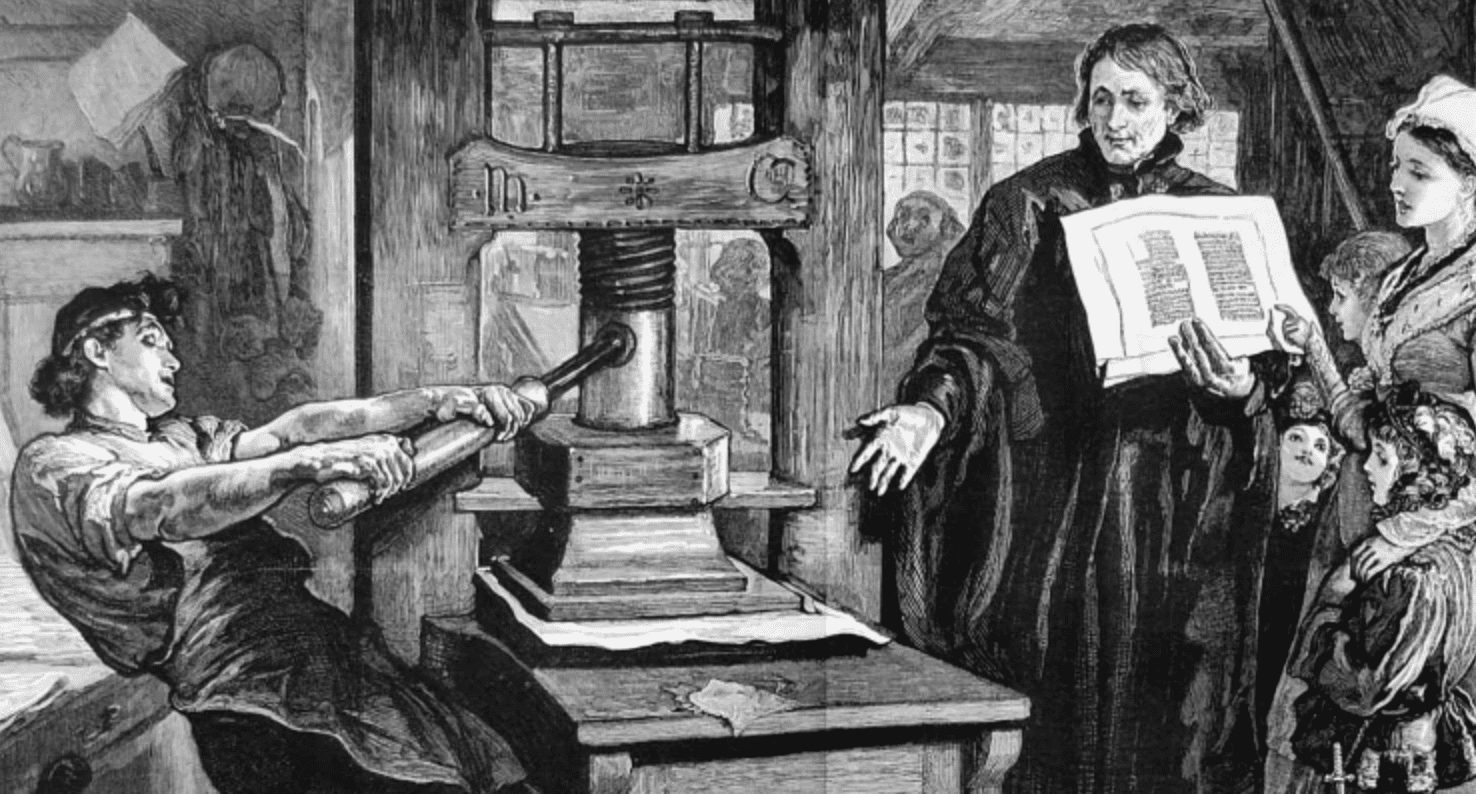

Around 1450, Johannes Gutenberg completed his printing press in Mainz. The city was guild-organized, conservative, and built around the controlled transmission of knowledge. The technology was invented there, but it did not flourish there.

Venice, forty years later, became the center of European publishing — not because it had better technology. Venice had the same technology. What Venice had was a different institutional architecture: merchant epistemology, tolerance for probabilistic outcomes, distributed accountability, and the infrastructure to receive what the press was capable of producing.

The press did not transform Mainz. It transformed the institutions that were ready to absorb it. Mainz built the press. Venice built the world the press made possible.

The same structural gap is running through every enterprise AI deployment right now. The model is capable. The demo works. The organization is not ready to receive what the model is capable of producing. The technology has arrived in Mainz, and Mainz is wondering why nothing is changing.

Galileo's problem was not the telescope. The instrument worked. His problem was that the institution he operated inside required certainty before it would update its model of the world. What he offered was probabilistic evidence — compelling, directional, not yet complete. The Church's epistemology demanded proof before permission. Galileo's epistemology worked the other way: permission first, then accumulate the proof in production.

The same dynamic runs through every AI deployment right now. The model produces a recommendation. The human asks: what's the confidence level? The model says eighty-three percent. The human says: not high enough. Come back when it's higher. The decision doesn't move.

"If the recommendation can't be defended with complete data, the decision doesn't move."

"What level of confidence is enough to act? Until the CEO answers that question publicly, every team will convert the AI's output into something that feels like a human decision before they touch it."

The deterministic culture was rational inside a deterministic system. Data was scarce. Errors compounded. You needed proof before commitment. But the agentic system is probabilistic by architecture. You cannot run a probabilistic engine inside a deterministic permission structure and expect it to produce value. The engine will stall at the boundary every time.

Epistemic permission is not about lowering standards. It is about replacing "prove it completely" with "what level of confidence is enough to act?" This is a CEO-level question because the answer must be given publicly and visibly, in a way that releases the organization from its default behavior. Until the CEO does this, every team will convert the AI's output into something that feels like a human decision before they touch it, and the Supervision Burden will remain permanent.
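One way to picture a publicly stated answer is as an explicit threshold per class of decision. The sketch below is a minimal illustration, not a prescribed implementation: the decision classes, the threshold values, and the names `ACT_THRESHOLDS`, `Recommendation`, and `route` are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical thresholds, published per decision class.
# The numbers are illustrative assumptions, not recommendations.
ACT_THRESHOLDS = {
    "discount_offer": 0.80,
    "contract_change": 0.95,
}


@dataclass
class Recommendation:
    decision_class: str
    action: str
    confidence: float  # model-reported confidence in [0, 1]


def route(rec: Recommendation) -> str:
    """Return 'act' when confidence meets the published threshold, else 'review'."""
    threshold = ACT_THRESHOLDS.get(rec.decision_class)
    if threshold is None:
        # No published threshold: the decision falls back to human review.
        return "review"
    return "act" if rec.confidence >= threshold else "review"
```

Under these assumed numbers, an eighty-three percent recommendation moves for a discount offer and waits for review on a contract change. The point of the sketch is that the answer exists in advance and is visible to everyone, so it is not renegotiated decision by decision.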

Lorenzo de' Medici did not fund the Platonic Academy because he knew what it would produce. He funded it because he understood that the transformation he wanted to see in Florence required investing in ideas before they could be justified by returns. He was not buying a painting. He was funding the possibility of an entirely new way of organizing knowledge.

The Chief AI Officer model is the modern equivalent of buying a painting. It creates accountability for adoption metrics — rollout percentage, tool usage, pilot count — while insulating the rest of the organization from the decisions that actually matter. When the pilot fails, the AI team absorbs the failure. Middle management self-protects. The pilot stays a pilot.

The Medici patron model does something the AI officer model structurally cannot: it absorbs risk at the level of the principal. When the CEO owns an outcome — names it, budgets it, attaches their reputation to it — middle management stops self-protecting. The pilot becomes a production deployment because failure costs the person who authorized it.

The four-buckets problem is the practical mechanism. Every autonomous AI deployment operates across four information domains. The Chief AI Officer sees the first bucket. The CEO, who owns the operating reality, is the only person who can authorize action in the other three. This is not a delegation question. It is a structural one.

Venice's canal system was not decoration. It was the operating infrastructure that made the commercial model possible. Without the canals, the goods could not move. Without the goods moving, the city could not function. The canal was not the product. The canal was the prerequisite for the product.

The Context Graph is the same thing for an agentic organization. A large language model operating without a context graph is technically impressive and operationally not good enough. It cannot distribute its output at scale because it has no memory of the operating world it is supposed to serve.

The Context Graph is not a technical project to be handed to the AI team. It is the wiring together of everything the organization actually runs on — CRM records, conversation transcripts, call logs, billing histories, the unwritten rules that leave when someone resigns. It must be funded separately, given its own roadmap, assigned clear ownership, and treated as the infrastructure decision it is. This is the Venetian canal investment. It is not optional, and it is not a feature. It is the prerequisite for everything that follows.
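As a rough illustration of what "wiring together" could mean in practice, the sketch below links records from different systems into one small graph. It assumes a deliberately simple in-memory structure. The class `ContextGraph`, its methods, and the sample identifiers are hypothetical, and a real context graph would need persistence, access control, and far richer relations.

```python
from collections import defaultdict


class ContextGraph:
    """Toy in-memory graph linking records from different source systems."""

    def __init__(self):
        self.nodes = {}                # node_id -> attribute dict
        self.edges = defaultdict(set)  # node_id -> {(relation, neighbor_id)}

    def add_node(self, node_id, **attrs):
        self.nodes.setdefault(node_id, {}).update(attrs)

    def link(self, source, relation, target):
        # Store both directions so context can be gathered from either end.
        self.edges[source].add((relation, target))
        self.edges[target].add((f"inverse:{relation}", source))

    def context_for(self, node_id):
        """Return the attributes of every node directly linked to node_id."""
        neighbors = sorted(nbr for _, nbr in self.edges.get(node_id, ()))
        return {nbr: self.nodes.get(nbr, {}) for nbr in neighbors}


# Hypothetical records from a CRM, a call log, and a billing system.
graph = ContextGraph()
graph.add_node("account:acme", source="crm", tier="enterprise")
graph.add_node("call:2291", source="call_log", summary="asked about an invoice")
graph.add_node("invoice:7781", source="billing", status="overdue")
graph.link("account:acme", "had_call", "call:2291")
graph.link("account:acme", "billed_by", "invoice:7781")

context = graph.context_for("account:acme")
```

Here `context` gathers the call and the overdue invoice around the account, which is the kind of operating memory a model lacks when each system is queried in isolation.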

How to Measure

If you are building Venice — not decorating Mainz — three leading indicators will show it before any revenue number does.

The Discontinuity

The Industrial Revolution is the most useful historical comparison, and the most widely misread one. Between 1760 and 1840, the machinery was deployed. Steam engines were installed. Factories were built. Yet productivity growth over that period was modest. The transformation that looks inevitable in retrospect did not show up in economic output until roughly 1840 to 1880, after the organizational structures, labor contracts, city infrastructure, legal frameworks, and capital markets had all reorganized around the new operating reality.

The machinery did not produce the transformation. The institutional reorganization did. Robert Solow described a version of this in 1987: "You can see the computer age everywhere but in the productivity statistics." Forty years of computing infrastructure, and the productivity gains were still waiting for the institutional reorganization that would unlock them.

The same discontinuity is running now, at the level of individual organizations. The models are already capable. The gap between capability and economic value creation is institutional, not technical. Every month an organization spends inside Mainz — optimizing adoption metrics, running pilots that stay pilots, waiting for higher confidence scores before moving — is a month it will need to recover when it finally decides to build Venice.

Published on March 14, 2026
